15 Questions to Ask Before Outsourcing Your District Data Stack

- Published on: March 19, 2026

- Updated on: March 26, 2026

- Reading Time: 8 mins

Outsourcing Your District Data Stack Can Be Relief or Chaos

What the District Data Stack Actually Includes

How to Use the Managed Data Services Evaluation Scorecard

Non-Negotiable Areas

Negotiable Areas

15 Questions to Evaluate Managed Data Services

A) Data Ownership and Exit Strategy (Avoid Vendor Lock-In)

1. Who Owns the Data, Transformation Logic, and Integration Code?

2. If the District Ends the Contract, What Exactly Is Returned?

3. How Does the Provider Prevent Operational Dependency on a Single Team?

B) Security, Privacy, and Auditability

4. Can Access Be Restricted at the Field Level Using Role-Based Permissions?

5. What Audit Logs Exist, and How Long Are They Retained?

6. How Is Sensitive Data Protected, and What Is the Incident Response Process?

C) Integration Resilience and Change Management

7. How Are Schema or API Changes Detected?

8. What Integration Patterns Are Used, and Are Mappings Documented?

9. How Are Identities Matched Across Systems?

D) Data Quality and Reporting Readiness

10. What Automated Validation Rules Run Nightly?

11. How Is Data Health Measured Over Time?

12. How Are Reporting Definitions Governed?

E) Operations, Support, and Implementation Reality

13. What Do Day-to-Day Operations Look Like?

14. What Implementation Approach Is Used?

15. What Training and Documentation Are Included?

What to Request Before Signing

Pilot Acceptance Tests: Validate Before You Commit

Managed Data Services Evaluation Comes Down to Trust and Proof

FAQs

District systems, from assessment to administration, continue to expand every year. Each new platform generates its own data streams, which adds to the information that districts must manage.

At this scale, technology teams must oversee data moving across many systems. As a result, district teams spend significant time stitching systems together and monitoring the pipelines that move data between them.

Facing these pressures, some districts explore managed data services to help run their data infrastructure. At the same time, district leaders must ensure that outsourcing data management does not reduce pipeline visibility or weaken district control over critical data systems.

Outsourcing Your District Data Stack Can Be Relief or Chaos

When districts consider outsourcing their data stack, the goal is usually operational stability. Leaders want reporting systems that work reliably.

But districts are not outsourcing dashboards. They are outsourcing infrastructure that moves, validates, and secures data across systems. That infrastructure often becomes visible only when operational issues appear:

- Late-night data extracts used to repair reporting gaps

- Manual reconciliation between platforms

- Integrations that break when vendors update APIs

- Delays in compliance or state reporting workflows

- Technology teams stretched thin by ongoing maintenance

For many districts, outsourcing begins as a way to stabilize these operational pressures. Some districts are managing increasingly complex digital ecosystems while operating under constrained resources.

At the same time, staffing pressure across schools remains significant. Research reports that more than 60% of educators experience high levels of burnout, often linked to workload and operational demands.

In this environment, outsourcing parts of the data stack can feel like relief. But without a structured managed data services evaluation, it can also introduce a different problem: long-term vendor lock-in and reduced visibility into how district data systems operate.

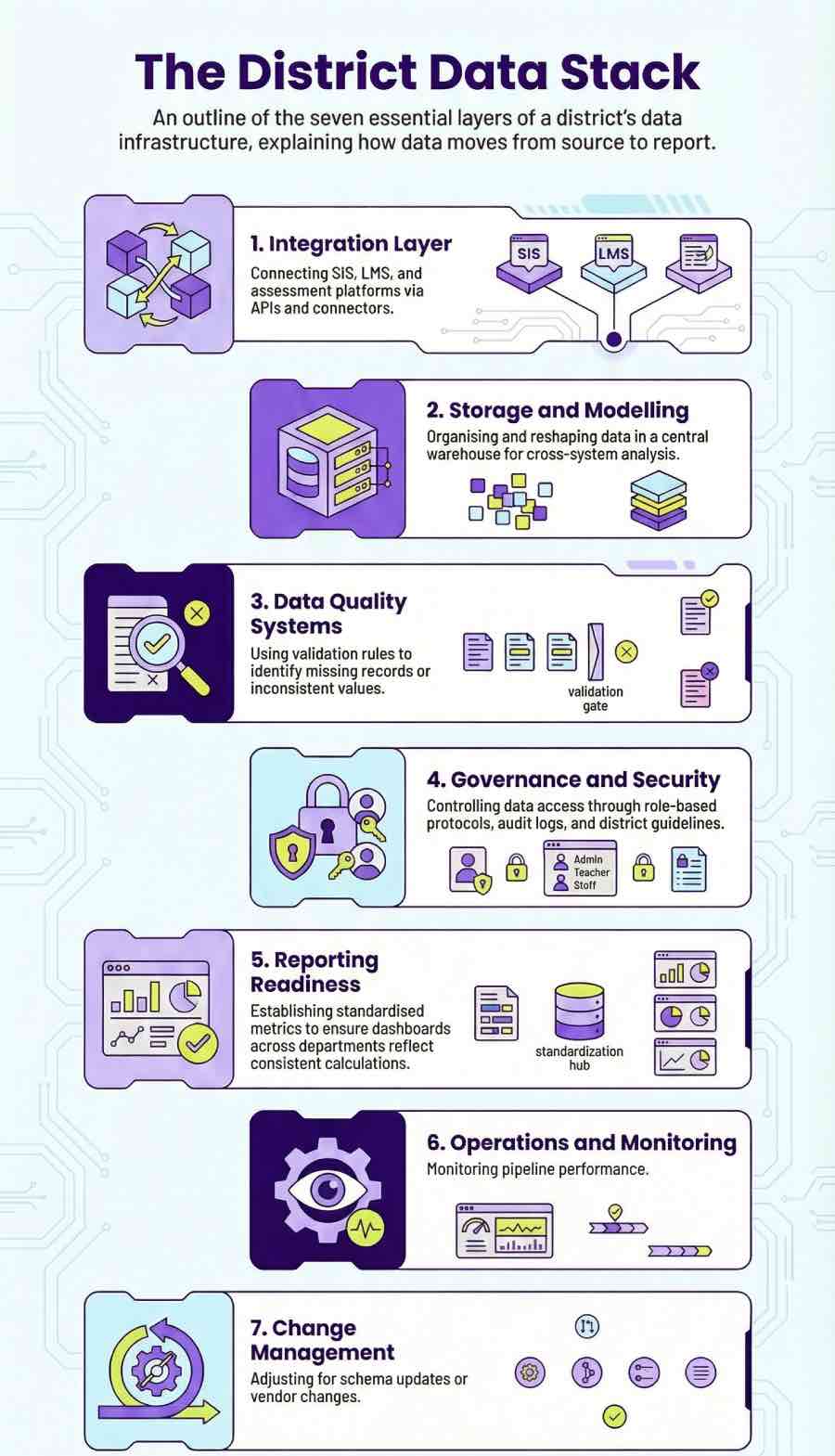

What the District Data Stack Actually Includes

Before evaluating vendors, district leaders need a clear view of what sits behind the district data stack. In most districts, several layers work together to move, store, check, and secure data across systems.

- Integration Layer: This is where data first moves between platforms. APIs and connectors link systems such as student information systems (SISs), learning management systems, assessment platforms, rostering tools, and identity services.

- Storage and Modeling: Once collected, the data is stored in a warehouse or lakehouse. Here it is organized and reshaped so different systems can be analyzed together.

- Data Quality Systems: District data rarely arrives perfectly aligned. Validation rules and monitoring checks help identify missing records, inconsistent values, or mismatched identifiers before the information reaches reports.

- Governance and Security: District policies determine how data can be accessed and used. These controls typically include role-based access control, audit logs, and broader data governance guidelines.

- Reporting Readiness: Different departments often use the same data for different purposes. Shared definitions and standardized metrics ensure that dashboards and reports reflect the same calculations.

- Operations and Monitoring: Behind every data pipeline is ongoing maintenance. Monitoring tools track pipeline performance, trigger alerts when jobs fail, and support structured incident response when issues appear.

- Change Management: Digital systems evolve constantly. Schema updates, vendor changes, or API modifications can break pipelines unless there are processes in place to detect and adjust for those changes.

Understanding these layers helps district leaders see what is actually being outsourced when a managed provider takes responsibility for the data environment.

How to Use the Managed Data Services Evaluation Scorecard

Vendor demos can make a platform look polished. How the platform behaves once it becomes part of everyday district operations is another question. A vendor comparison scorecard can help district teams review the providers. Each provider can be rated from 1 to 5 across the questions that follow. Some areas should carry more weight than others.

Non-Negotiable Areas

- Data ownership of district records, transformation logic, and integration pipelines

- Security and privacy controls that protect student data across systems

- Governance transparency, including how access policies and changes are managed

- Exit readiness, ensuring the district can retrieve its data and move providers if needed

Negotiable Areas

- Dashboard features used for viewing analytics

- Reporting interfaces that present district metrics

- Visualization tools that support different reporting styles

These scores should always be supported with evidence. District teams may request artifacts such as system screenshots, audit logs, export samples, or operational runbooks that show how pipelines are maintained in practice. Using a structured managed data services checklist helps districts conduct a more grounded data stack contract evaluation.
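To make the weighting concrete, here is a minimal sketch of how a scorecard like this could be tallied. The weights, the score floor, and the names `Rating` and `evaluate` are illustrative assumptions, not part of any standard rubric; a district would set its own values.

```python
from dataclasses import dataclass

# Hypothetical weights: non-negotiable areas count three times as much.
NON_NEGOTIABLE_WEIGHT = 3
NEGOTIABLE_WEIGHT = 1
# Hypothetical floor: a non-negotiable score below this raises a flag.
NON_NEGOTIABLE_FLOOR = 3


@dataclass
class Rating:
    area: str
    score: int              # 1 to 5
    non_negotiable: bool
    evidence: str = ""      # e.g. "sample audit log"; empty if none supplied


def evaluate(ratings: list[Rating]) -> dict:
    """Return a weighted average plus reasons to pause the evaluation."""
    if not ratings:
        raise ValueError("at least one rating is required")
    flags = []
    total = 0
    weight_sum = 0
    for r in ratings:
        if not 1 <= r.score <= 5:
            raise ValueError(f"{r.area}: score must be between 1 and 5")
        weight = NON_NEGOTIABLE_WEIGHT if r.non_negotiable else NEGOTIABLE_WEIGHT
        total += r.score * weight
        weight_sum += weight
        if r.non_negotiable and r.score < NON_NEGOTIABLE_FLOOR:
            flags.append(f"{r.area}: below floor")
        if not r.evidence:
            flags.append(f"{r.area}: no evidence supplied")
    return {"weighted_average": round(total / weight_sum, 2), "flags": flags}


ratings = [
    Rating("Data ownership", 5, True, "contract excerpt"),
    Rating("Exit readiness", 2, True, "exit checklist"),
    Rating("Dashboard features", 4, False),
]
result = evaluate(ratings)
```

Keeping the flags separate from the average is a deliberate choice: a strong overall score should not hide a weak non-negotiable area or a rating that has no evidence behind it.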

A Vendor Comparison Scorecard: 15 Questions to Evaluate Managed Data Services

The following framework organizes 15 questions across five categories that matter most when conducting a managed data services evaluation.

A) Data Ownership and Exit Strategy (Avoid Vendor Lock-In)

1. Who Owns the Data, Transformation Logic, and Integration Code?

Evidence to Request: Contract language that describes data ownership, along with export examples for pipelines and transformations.

Risks to Flag: Proprietary formats that prevent exporting district data, and clauses that mention shared ownership.

2. If the District Ends the Contract, What Exactly Is Returned?

Evidence to Request: A documented exit checklist covering data exports, pipeline configurations, and transformation mappings.

Risks to Flag: Undefined timelines or unclear commitments around what assets will be returned.

3. How Does the Provider Prevent Operational Dependency on a Single Team?

Evidence to Request: Runbooks describing pipeline operations, onboarding documentation, and training materials that explain how integrations and reporting pipelines are maintained.

Risks to Flag: Undocumented processes or operational knowledge held only by the vendor’s engineering team.

B) Security, Privacy, and Auditability

4. Can Access Be Restricted at the Field Level Using Role-Based Permissions?

Evidence to Request: Demonstrations of role-based access control, including examples where sensitive fields are masked or restricted by user role.

Risks to Flag: Permission models limited to broad user categories without field-level restrictions.
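As a rough illustration of what field-level restriction means in practice, the sketch below masks any field a role is not explicitly allowed to see. The roles, field names, and the `view_record` helper are hypothetical; a real platform would enforce this in the warehouse or reporting layer rather than in application code.

```python
# Hypothetical roles and the fields each one may read.
FIELD_PERMISSIONS = {
    "counselor": {"student_id", "name", "grade_level", "iep_status"},
    "teacher": {"student_id", "name", "grade_level"},
    "analyst": {"student_id", "grade_level"},
}
MASK = "***"


def view_record(record: dict, role: str) -> dict:
    """Return a copy of the record with non-permitted fields masked.

    Unknown roles get an empty allow-list, so every field is masked.
    """
    allowed = FIELD_PERMISSIONS.get(role, set())
    return {key: (value if key in allowed else MASK) for key, value in record.items()}


record = {"student_id": "S1", "name": "A. Student", "grade_level": 7, "iep_status": True}
teacher_view = view_record(record, "teacher")
```

In a vendor demonstration, the equivalent check is to log in under two different roles and confirm that the same record shows different fields.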

5. What Audit Logs Exist, and How Long Are They Retained?

Evidence to Request: Example audit logs showing user access and system changes, along with the log retention policy used by the provider.

Risks to Flag: Logging that only captures limited activity or retention periods that are too short for investigation.

6. How Is Sensitive Data Protected, and What Is the Incident Response Process?

Evidence to Request: Documentation describing encryption methods for stored and transmitted data, along with the provider’s incident response playbook.

Risks to Flag: Missing breach response procedures or unclear roles during a security event.

C) Integration Resilience and Change Management

7. How Are Schema or API Changes Detected?

Evidence to Request: Monitoring alerts or logs that show when upstream systems change their schema or API behavior.

Risks to Flag: Integration failures only discovered after pipelines stop running.
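One simple way to detect upstream changes before a pipeline breaks is to compare each incoming payload against the schema the pipeline expects. The sketch below assumes flat JSON-like records and uses hypothetical field names; it is an illustration of the idea, not any vendor's detection method.

```python
def infer_schema(record: dict) -> dict[str, str]:
    """Map each field name to the Python type name of its value."""
    return {key: type(value).__name__ for key, value in record.items()}


def diff_schema(expected: dict[str, str], observed: dict[str, str]) -> dict[str, list[str]]:
    """Report fields that were added, removed, or changed type."""
    shared = expected.keys() & observed.keys()
    return {
        "added": sorted(observed.keys() - expected.keys()),
        "removed": sorted(expected.keys() - observed.keys()),
        "retyped": sorted(k for k in shared if expected[k] != observed[k]),
    }


# Hypothetical expected schema for a roster feed.
expected = {"student_id": "str", "grade_level": "int", "enrolled": "bool"}
# Hypothetical payload after an upstream vendor update.
payload = {"student_id": "S1", "grade_level": "7", "homeroom": "12B"}

drift = diff_schema(expected, infer_schema(payload))
# drift == {"added": ["homeroom"], "removed": ["enrolled"], "retyped": ["grade_level"]}
```

Any non-empty list in the result is a reason to alert someone, which is the kind of log entry or notification worth asking a provider to show.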

8. What Integration Patterns Are Used, and Are Mappings Documented?

Evidence to Request: Integration documentation that outlines how records move between the SIS, LMS, and assessment systems.

Risks to Flag: Data mappings that exist only inside vendor code without documentation.

9. How Are Identities Matched Across Systems?

Evidence to Request: Identity matching rules and examples of how student or staff conflicts are resolved.

Risks to Flag: Silent merges that overwrite existing records.
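The alternative to a silent merge is to route conflicting records to human review. The sketch below matches records from two systems on a shared identifier and holds back any pair whose comparison fields disagree. The field names (`state_id`, `last_name`, `birth_date`) and the `match_identities` helper are assumptions for illustration.

```python
def match_identities(sis_rows, lms_rows, key="state_id", compare=("last_name", "birth_date")):
    """Match records on a shared key without overwriting conflicting data.

    Assumes the key is unique within each system; duplicate checks
    belong in a separate validation step.
    """
    lms_by_key = {row[key]: row for row in lms_rows}
    matched, review, unmatched = [], [], []
    for sis in sis_rows:
        lms = lms_by_key.get(sis[key])
        if lms is None:
            unmatched.append(sis[key])
            continue
        conflicts = [field for field in compare if sis.get(field) != lms.get(field)]
        if conflicts:
            # Do not merge: queue the pair for a person to resolve.
            review.append((sis[key], conflicts))
        else:
            matched.append(sis[key])
    return {"matched": matched, "review": review, "unmatched": unmatched}


sis = [
    {"state_id": "1", "last_name": "Lee", "birth_date": "2012-01-05"},
    {"state_id": "2", "last_name": "Cruz", "birth_date": "2011-03-09"},
    {"state_id": "3", "last_name": "Shah", "birth_date": "2010-11-30"},
]
lms = [
    {"state_id": "1", "last_name": "Lee", "birth_date": "2012-01-05"},
    {"state_id": "2", "last_name": "Cruz", "birth_date": "2011-09-03"},
]
outcome = match_identities(sis, lms)
```

Here the second record lands in the review queue because the birth dates disagree, and the third is reported as unmatched rather than dropped.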

D) Data Quality and Reporting Readiness

10. What Automated Validation Rules Run Nightly?

Evidence to Request: Sample validation rules used to detect missing records, duplicate identifiers, or schema mismatches.

Risks to Flag: Checks that only verify pipeline completion without reviewing data quality.
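For a sense of what a sample validation rule might look like, the sketch below checks a batch for missing required fields and duplicate identifiers. The required field list and the `validate_batch` helper are hypothetical; real rule sets are usually larger and often expressed in SQL or a data quality tool.

```python
from collections import Counter

# Hypothetical required fields for an enrollment feed.
REQUIRED_FIELDS = ("student_id", "school_id", "grade_level")


def validate_batch(rows, required=REQUIRED_FIELDS):
    """Return a list of human-readable issues; an empty list means the batch passed."""
    issues = []
    for index, row in enumerate(rows):
        missing = [field for field in required if row.get(field) in (None, "")]
        if missing:
            issues.append(f"row {index}: missing {', '.join(missing)}")
    counts = Counter(row.get("student_id") for row in rows if row.get("student_id"))
    for student_id, count in sorted(counts.items()):
        if count > 1:
            issues.append(f"duplicate student_id {student_id} ({count} rows)")
    return issues


rows = [
    {"student_id": "S1", "school_id": "A", "grade_level": 7},
    {"student_id": "S1", "school_id": "A", "grade_level": 7},
    {"student_id": "S2", "school_id": "", "grade_level": 8},
]
issues = validate_batch(rows)
```

A job that loads these three rows would still report success, which is why a completion check alone says nothing about data quality.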

11. How Is Data Health Measured Over Time?

Evidence to Request: Dashboards or reports that track pipeline runs and highlight missing or delayed data loads.

Risks to Flag: Monitoring that only confirms jobs ran successfully.
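A health check that goes beyond "the job ran" can be as simple as comparing freshness and volume against the previous load. The thresholds below (26 hours, a 20% drop) and the `load_health` helper are illustrative assumptions, not recommended values.

```python
from datetime import datetime, timedelta


def load_health(last_loaded_at, row_count, previous_row_count, now,
                max_age=timedelta(hours=26), max_drop=0.2):
    """Return findings for a dataset; an empty list means it looks healthy."""
    findings = []
    if now - last_loaded_at > max_age:
        findings.append("stale")
    if previous_row_count > 0 and row_count < previous_row_count * (1 - max_drop):
        findings.append("row_count_drop")
    return findings


now = datetime(2026, 3, 20, 6, 0)
healthy = load_health(datetime(2026, 3, 20, 2, 0), 980, 1000, now)
unhealthy = load_health(datetime(2026, 3, 18, 2, 0), 500, 1000, now)
```

Tracking these findings per dataset over weeks is what turns a pass/fail job log into a view of data health over time.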

12. How Are Reporting Definitions Governed?

Evidence to Request: A documented registry of approved metrics and the process used to update them.

Risks to Flag: Multiple dashboards calculating the same metric differently.

E) Operations, Support, and Implementation Reality

13. What Do Day-to-Day Operations Look Like?

Evidence to Request: Examples of monitoring dashboards, support SLAs, and escalation paths used when pipelines fail.

Risks to Flag: No clear ownership once the system is live, or support handled only through ad-hoc tickets.

14. What Implementation Approach Is Used?

Evidence to Request: A rollout plan that shows phases, testing checkpoints, and defined acceptance testing before production use.

Risks to Flag: Large “big bang” deployments with little validation before the system goes live.

15. What Training and Documentation Are Included?

Evidence to Request: Onboarding guides, operational documentation, and examples of training materials provided to district staff.

Risks to Flag: One-time onboarding sessions with no ongoing documentation or enablement.

Districts should also assess whether providers can support ongoing platform development, system changes, and new analytics initiatives.

What to Request Before Signing

During vendor discussions, district teams often ask for a few real examples of how the environment actually operates.

Typical materials include:

- Raw and modeled data exports from the warehouse

- Validation rule sets used to check incoming data

- Operational runbooks that describe pipeline maintenance

- Incident reports showing how failures were handled

- Internal system documentation

- Data dictionaries used by reporting teams

Looking through these files usually tells district leaders far more than a demo. The details reveal how pipelines are maintained, how issues are handled, and how transparent the environment really is.

Pilot Acceptance Tests: Validate Before You Commit

Some districts also run a short pilot before committing to a full deployment. The idea is simple: observe how the platform behaves under normal district workloads. Common checks include things like verifying nightly refresh schedules, confirming validation rules catch known errors, or reproducing an existing district report using the new data environment.

Security is often reviewed at this stage as well. Teams may confirm that role-based permissions behave the way policies require. Before starting the pilot, districts usually define clear walk-away conditions. If the environment cannot meet those expectations, the project can stop before a full rollout begins.
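Walk-away conditions are easiest to enforce when they are written down before the pilot starts. The sketch below records each pilot check as pass or fail and treats any failed or skipped walk-away check as a reason to stop. The check names and the `pilot_decision` helper are hypothetical.

```python
def pilot_decision(results: dict[str, bool], walk_away: set[str]) -> str:
    """Return "stop", "review", or "proceed" for a set of pilot check results.

    A walk-away check that was never run is treated the same as a failure.
    """
    not_run = walk_away - results.keys()
    failed = {name for name, passed in results.items() if not passed}
    if not_run or failed & walk_away:
        return "stop"
    return "review" if failed else "proceed"


# Hypothetical checks drawn from the pilot activities described above.
results = {
    "nightly_refresh_on_schedule": True,
    "validation_catches_known_errors": True,
    "existing_report_reproduced": False,
    "rbac_matches_policy": True,
}
walk_away = {"validation_catches_known_errors", "rbac_matches_policy"}
decision = pilot_decision(results, walk_away)  # "review"
```

In this example the pilot does not stop, because the failed check was not a walk-away condition, but it also does not proceed without someone looking at why the report could not be reproduced.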

Managed Data Services Evaluation Comes Down to Trust and Proof

Outsourcing the data stack is becoming routine in many districts. The real challenge is deciding which partner can operate that environment without reducing transparency.

A careful managed data services evaluation helps leaders focus on operational evidence rather than product claims. Documentation, testing, and clear governance practices often reveal more than a sales presentation.

When districts keep visibility into how data moves, how pipelines run, and how changes are managed, outsourcing does not mean giving up control. It simply means the infrastructure is being run by a different team.

FAQs

Look at how the provider runs the environment. Review data ownership terms, integration reliability, security controls, and the documentation behind the pipelines.

Keep ownership of the data and request export access to datasets, pipelines, and transformation logic. Clear documentation and open formats make it easier to move systems later.

Ask for operational artifacts such as runbooks, sample audit logs, validation rules, and system documentation. These show how the environment operates.

Acceptance testing in managed data outsourcing is a short pilot in which the district tests integrations, data refresh schedules, and reporting logic before committing to a full rollout.
